Anthropic, the AI research firm known for its Claude series of AI models, has confirmed that it accidentally leaked 512,000 lines of source code for its popular Claude Code CLI tool. The incident, attributed to a “release packaging issue caused by human error,” has raised significant concerns in the tech community because it may give competitors and developers insight into Anthropic’s technology, although no sensitive customer data was compromised.

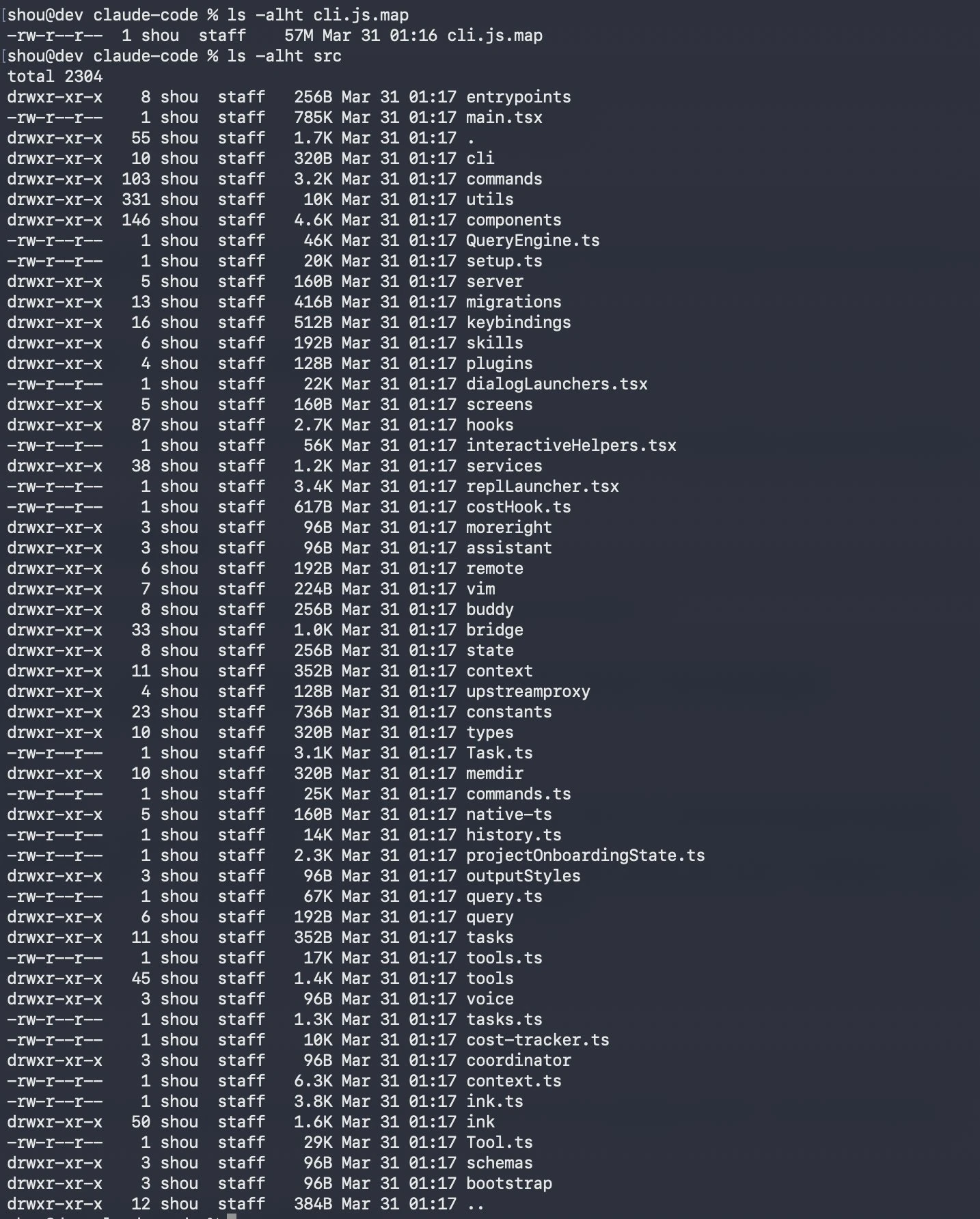

Yesterday, a debug source map file was accidentally bundled into Claude Code v2.1.88 on npm, exposing roughly 512,000 lines across ~1,900 TypeScript files that describe the CLI’s orchestration, memory, tool loops, and agentic architecture. Anthropic characterized it as a human “packaging error,” not a security breach; customer data was not leaked.

More realistically, however, the release pipeline likely had either no test cases for the packaging step or failing ones that went unheeded, possibly the product of an AI “vibe coded” solution, given that the sole issue was a misconfigured value that is standard for this type of deployment.
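A packaging test of this kind can be very small. The following is a minimal sketch, not Anthropic’s actual release process: it assumes the files about to be published have been unpacked into a local directory, and the function names `find_source_maps` and `check_package` are hypothetical, introduced only for illustration.

```python
import os
from pathlib import Path


def find_source_maps(package_dir):
    """Return sorted relative paths of every *.map file under package_dir."""
    root = Path(package_dir)
    hits = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            # Source maps conventionally end in ".map" (e.g. cli.js.map).
            if name.endswith(".map"):
                hits.append((Path(dirpath) / name).relative_to(root).as_posix())
    return sorted(hits)


def check_package(package_dir):
    """Raise if the directory about to be published contains source maps."""
    leaked = find_source_maps(package_dir)
    if leaked:
        raise RuntimeError(f"refusing to publish, source maps found: {leaked}")
    return True
```

Run as a release gate, a check like this would fail the build whenever a `.map` file ends up in the publishable output, regardless of which build setting put it there.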

The leak occurred when source maps shipped with Claude Code version 2.1.88, revealing more than 1,900 internal files, including unfinished features such as an unreleased “Tamagotchi Pet” and an “Always-On Agent.” Anthropic’s admission comes as it tests a new user interface for Claude Code amid the fallout from the exposure, which has prompted criticism of the company’s data management practices.

Industry analysts and software developers have expressed concern over the implications of the leaked materials, with some suggesting that the incident underscores a basic oversight in code management protocols. Anthropic’s “Undercover Mode,” designed to prevent internal leaks, was also among the exposed material, raising eyebrows about the effectiveness of its security measures. Observers note that the company is facing scrutiny not only for the leak itself but also for its subsequent actions, including a flurry of DMCA takedown notices aimed at preventing the spread of the exposed code.

The leak also revealed that Anthropic plans to (eventually) launch its newest AI model, “Claude Mythos,” which has been described as a “step change” in AI capabilities. Researchers have warned that the model may present advanced cybersecurity threats, a warning that has raised alarms given the context of the source code leak. As Anthropic navigates this challenging situation, the long-term reputational risks and competitive implications remain to be seen.