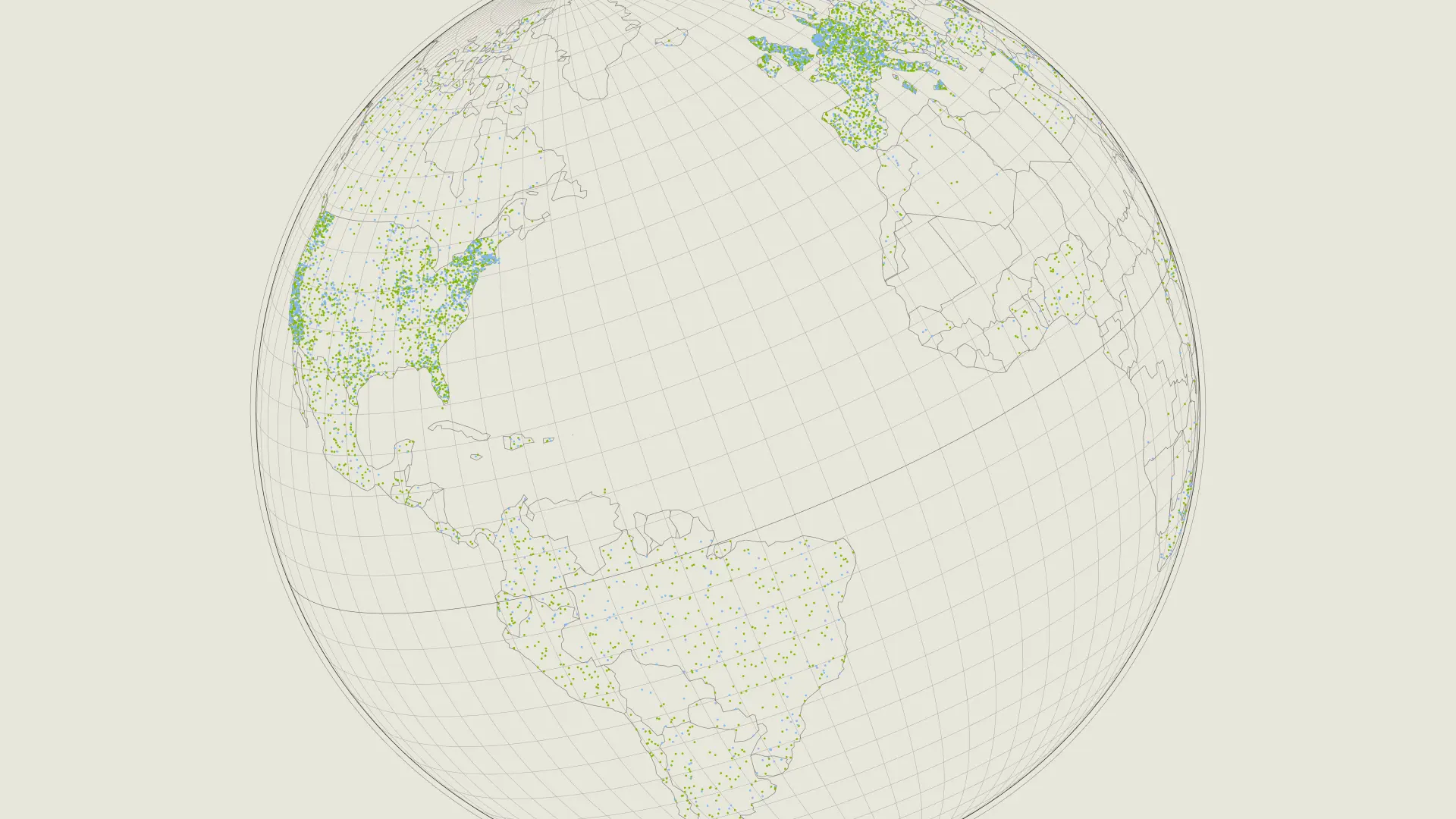

Anthropic, the artificial intelligence firm known for its AI chatbot Claude, has issued sweeping copyright takedown requests following an inadvertent leak of core code related to its Claude Code agent. Reports indicate the company has targeted over 8,100 GitHub repositories as part of its strategy to prevent potential copying and competition in the burgeoning AI market.

The takedown initiative has sparked irony-laden commentary across social media, as critics highlight the contrast between Anthropic’s current copyright efforts and its advocacy for open-source principles. Many observers have criticized the firm, suggesting it appears to be wielding copyright protections selectively after previously promoting a less restrictive approach to AI development.

In a related development, CEO Dario Amodei has been in Australia, addressing local policymakers about the critical nature of trust and safety in AI. The timing of the leak and the subsequent copyright strikes has led to criticism that the company is failing to uphold its own standards while grappling with the fallout of the incident.

Ultimately, the private firm, which has been fervently cornering slivers of the market, is navigating a true minefield. It badly needs to go public in order to keep financing its ever-increasing headcount while also protecting valuable IP and not running out of cash. It must balance the need to protect its intellectual property against the risk of increased scrutiny and reputational damage from its actions. Some of the leaked details included a fairly bare-bones way of detecting when a user was frustrated, such as keyword searches with a regular expression module.
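
For readers wondering what keyword-based frustration detection with regular expressions might look like in practice, here is a minimal sketch in Python. It is purely illustrative: the keyword list, the `looks_frustrated` function name, and the matching rules are all assumptions made for this example and are not taken from the leaked code.

```python
import re

# Hypothetical keyword list chosen for illustration only;
# it is not taken from any leaked source.
FRUSTRATION_KEYWORDS = ["this is broken", "not working", "useless", "wtf", "ugh"]

# One case-insensitive pattern. The \b word boundaries keep a short
# keyword such as "ugh" from matching inside a word like "though".
FRUSTRATION_PATTERN = re.compile(
    r"\b(?:" + "|".join(re.escape(k) for k in FRUSTRATION_KEYWORDS) + r")\b",
    re.IGNORECASE,
)


def looks_frustrated(message: str) -> bool:
    """Return True if the message contains any of the frustration keywords."""
    return FRUSTRATION_PATTERN.search(message) is not None
```

An approach like this is cheap and easy to audit, but it only catches the exact phrases on the list, which is presumably why it comes across as bare bones.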

As the AI sector grows increasingly competitive, efforts to prevent such leaks could become a pivotal focus for many firms. While the firm was adamant that this was human error, not an AI issue, there is some doubt about that. The misconfigured file could just as easily have been misread by an AI in the final steps before the commit was made public. That seems like the most likely scenario given the propensity for small hallucinations in distinct areas of a code repository.

Anthropic has committed a true blunder. As companies like Anthropic continue to expand their offerings, how they handle proprietary information versus public access will likely shape the discourse around AI for years to come.