In a rapid ascent, Anthropic’s AI chatbot, Claude, has climbed to the number two position in the U.S. App Store, just hours after the Department of Defense identified the company as a supply chain risk. The development has sparked discussion about ethical AI and its implications. Throughout February, Claude had fluctuated between positions 20 and 50 in the rankings, but the controversy appears to have significantly boosted its visibility among users.

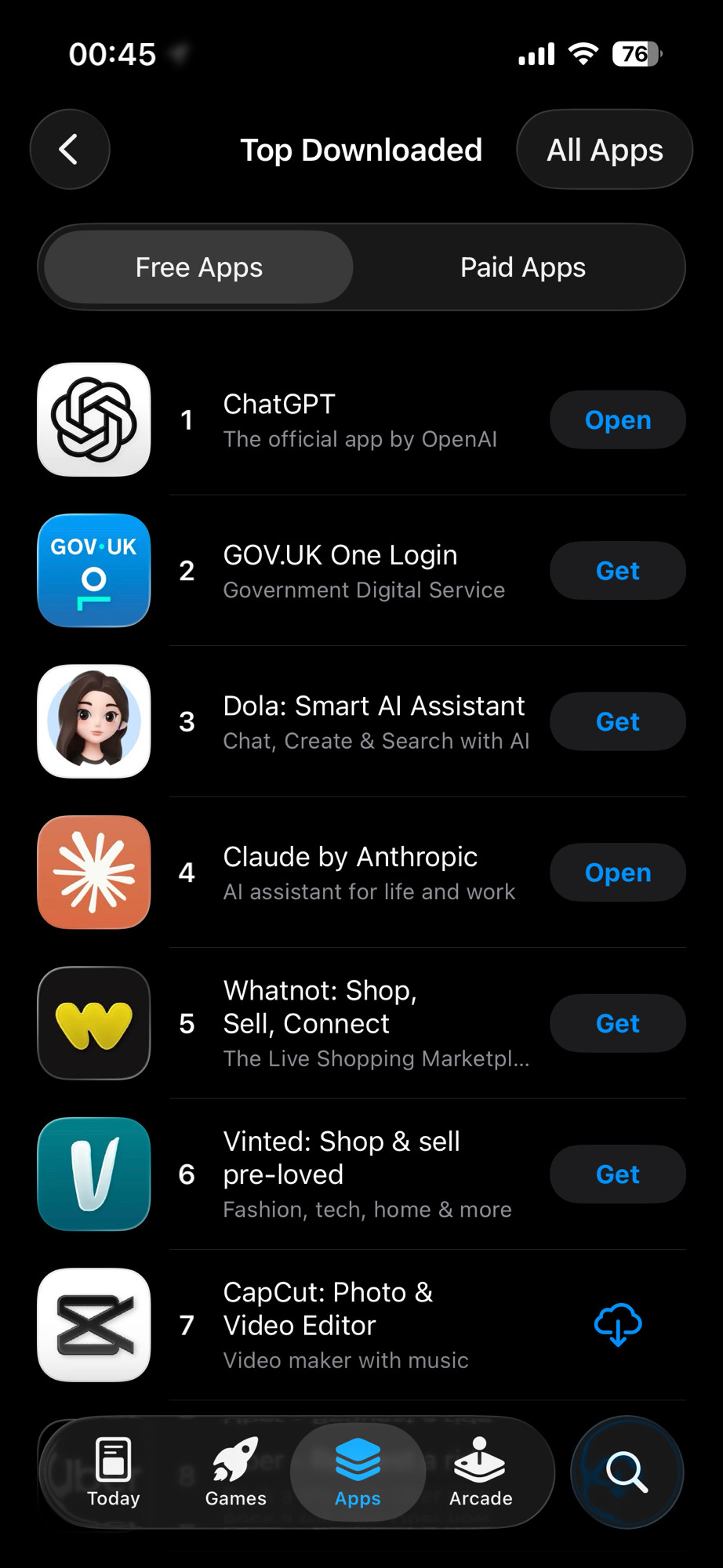

In the UK, Claude has also made a notable impact, ranking as the fourth most downloaded app. Observers have praised the app’s success, pointing to a potential shift in user preference as many long-time ChatGPT users switch to Claude, which offers a streamlined process for transferring user data and interactions.

Various reports suggest that Claude’s climb in the rankings fits a broader narrative about user trust in AI ethics, especially following Anthropic’s stance against cooperating with government surveillance initiatives. As one user put it, Anthropic seems to stand out as “the only AI company with any moral compass.”

“Today [March 1st], Claude has achieved the top spot in both app stores, validating Anthropic’s user-centric approach during turbulent times,” another user remarked. Analysts speculate that the spike in downloads reflects users rallying behind the company amid its conflict with the government.

Although the loss of government contracts raises questions about its future dealings, Anthropic is clearly gaining significant traction in the consumer market. The app’s performance reflects current consumer sentiment towards ethical business practices in AI development.